I had been working on the FantasySP redesign for over a year before it officially launched on May 20th, 2018. The redesign was something I first thought of doing over 3 years ago, but I kept putting it off due to working on other features and the sheer size of the project.

I knew it could not be delayed any longer after Google announced its mobile-first index. I thought I could have it up before this all went into effect, but then the news broke that the mobile-first index was going live in March.

Prior to the redesign, I had a mobile site and a desktop site. Each had its own codebase, both front-end and backend. Yes, you heard right. Not only that, but the mobile site didn’t have ALL of the features of the desktop site, and some of the ones it did have were stripped-down versions of their desktop counterparts.

The mobile site was so old that it used tables for layout, because that is what you used for maximum compatibility back when mobile sites were first gaining popularity.

The initial codebase for the mobile site was pre Bootstrap and pre front-end frameworks. It was back when Digg was the shit, so I decided to use their design for mobile FantasySP. It was back when responsive layouts didn’t exist yet and a separate mobile site was the only real option.

I also knew the task at hand was monumental and I knew I needed help for two reasons. First, I knew a professional designer would create something better than I could. Second, I knew this would speed up the process.

I contacted Ryan Scherf, and we settled on a framework (Bootstrap) and a new color scheme, and started to tackle a few pages. Ryan and I worked back and forth from about January of 2017 to May of 2017.

To call this simply a ‘redesign’ is an injustice. It’s way more than just a facelift since every piece of code is being touched behind the scenes. This is basically a relaunch of FantasySP, and I’ll get into more about that in a bit.

Learning to Ride Again

The thing about web development is that you are always evolving and constantly learning new technology. Switching to Bootstrap v4 meant ditching floats and moving to flexbox.

Flexbox in itself is a huge change with a steep learning curve. I still need to use a cheat sheet, but I am getting better with it every day. Basically, I’ll be using flex instead of floats for layout positioning.

Not only that, but Bootstrap itself has its own laws and ways of doing things that I had to learn. (Yes, it’s true, I never used Bootstrap before this.) Needless to say, having a designer come up with the Bootstrap theme was instrumental in getting started off on the right foot.

On top of Flexbox and Bootstrap, I needed to learn the modern way to develop on the front-end, which meant adding Gulp and Bower into the mix. (Though do not use Bower if you are starting out now; use Yarn instead.)

Ryan pointed me in the right direction so I could get started and get everything working.

Keep in mind that I hadn’t even started the real work yet. This was the work before the work. I had to learn 3 brand new things that were pretty damn challenging before things even got off the ground.

My goal was to get a variety of pages designed to get a base foundation to work off of, and I would spend the time to fill in the gaps and create pages based off of those core pages.

Slowly but surely I was getting the hang of the new workflow, and I could start taking Ryan’s designs, creating new pages, and tweaking the layouts he had already given me.

Starting Over

The redesign meant that I was starting over. Everything was going to be re-written from the ground up. If I was doing this redesign, I was going to do it justice.

The new FantasySP was going to be fast and customizable. It was going to be as lean as I could make it. And it was going to be as future-proof as I could possibly make it.

This meant all of my JavaScript functions had to be looked at, and most had to be recoded. Basic things like autocomplete, pop-up boxes, front-end data checks, and table sorting all had to be researched. Bootstrap would handle some of them, but many had to be included as extra JavaScript libraries.

From now on I would have one file for CSS and one file for JS and that was it.

It also meant I had to redesign the billing page and implement the newest version of Stripe on both the frontend and the backend. I was using code from 2011 and it was starting to give me problems on occasion.

I wasn’t going to try and reinvent the wheel. Honestly, I don’t have time to relearn EVERYTHING. I’m not switching to MEAN and ditching my stack but I will modernize it. (If you are looking for benchmarks, you’ll find them at the end of this article, but I’m not spoiling it here.)

And yes, I would still be using jQuery. I’d still be using nginx. I’d still be using PHP 7.x, part of what is commonly referred to as the LEMP stack. You may not like the stack, and hate PHP, but that’s because you’re an idiot. 🙂

Speaking of PHP, all of my code had to be looked at and either re-written or tweaked in order to fit into a unified codebase for a responsive layout.

Like I said, this task was daunting.

The Actual Design

Now that some of the geeky stuff is out of the way, let’s actually talk about the design. There are a few things I knew I wanted the new design to have:

- A new color because red reminds users of ESPN

- A better top navigation

- A dedicated section for user navigation

- Awesome player popups

- Reimagined fantasy tools

- A responsive layout, obviously

Once we figured out the colors and top navigation, the real work on the design could start. My wife was the one to narrow down that the color orange would work for the site, and Ryan picked the perfect shade to work with.

The day I got really excited about this redesign was when work began on the reimagined start/sit tool. One of the first mockups looked like this.

A good start, but I wanted the top section to pop colorwise. I thought it was missing something to make it stand out.

Based on that feedback, Ryan completely nailed the revamped design, and it is something I would never have come up with on my own.

The last examples I’ll show are the early mockups of the Player Page and Player Popups.

I needed a much better way to organize the chaos I had going on with the player pages, and I am pretty sure the first thing I got back was almost exactly what I used in my final design.

I didn’t provide much insight into what I was looking for other than please organize it all, and allow for a bunch of different boxes to the right side that I could work with.

As soon as I saw it, I could not wait until this thing went live. It looked light years better than what was already on the site.

The first player popup design that we started with was great right off the bat, but much like the start/sit tool, I wanted a bit more color to the header.

Which then turned into this:

The live popup as of today looks like this:

Once Ryan’s design job was done, it was time to get a good sampling of live pages to turn into real HTML/CSS. I had no idea how to put together a Bootstrap theme, so I really needed to see how it was supposed to be done.

I would take what he gave me, which was the core styling of FantasySP and a few pages, and then come up with designs for the rest of them. I think one of the first designs I converted into CSS/HTML was the player popup, and boy oh boy did that take me a long time.

This was when I had to really buckle down and learn the ins and outs of Bootstrap and flex positioning. Needless to say, it was not fun and I felt lost for a few weeks. I still kinda get lost with flex positioning because it is just so different.

Once the rest of the designs were turned into CSS I finally had enough material to create the beta subdomain and start turning these HTML/CSS pages into actual working pages with live data.

As it turns out, that day happened to be May 17th, 2017. As you can see, all I had was a hello world.

At this point, I would be starting from scratch on all of the PHP backend logic. In some cases, I would take my old PHP code and convert it to work with the new layouts. It was a major milestone, but my work was far from over.

From this point, it was still another year before I officially launched the new FantasySP.

In June of 2017, I finally had enough of the beta site up and running to open it up for users to start trying. From that point on, I tackled each page and tool and kept grinding away.

Redesigning the Fantasy Assistant, Trade Analyzer, Draft Genius, billing, etc. was extremely time consuming and very challenging, but totally worth it once I was finished.

The Fantasy Assistant itself probably took me at least a month on its own because of the sheer size of it, plus for the first time it had to work on mobile.

Time for Stats

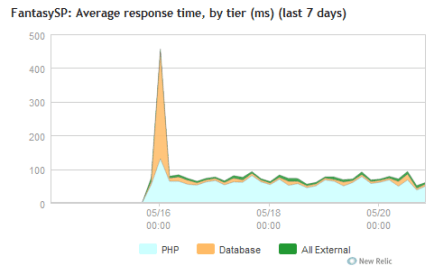

Like I mentioned earlier, the redesign was supposed to be much faster than the original site. Paying members will get an even faster experience because no advertisements are shown.

The numbers do not lie.

Front-end performance is measured by the percentage of page loads that fall into each of three categories: Satisfied, Tolerating, and Frustrating. This data is taken from New Relic.

ORIGINAL: Satisfied: 53%, Tolerating: 40%, Frustrating: 7%

NEW DESIGN: Satisfied: 80%, Tolerating: 18%, Frustrating: 3%
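
For anyone curious how these three buckets can be boiled down to a single number, here is a minimal sketch. It assumes the standard Apdex convention: a page load counts as Satisfied if it finishes within a threshold T, Tolerating if it finishes within 4T, and Frustrating otherwise, with Tolerating loads counting half toward the score. The function names and the threshold in the usage note are hypothetical, not taken from my New Relic settings.

```typescript
type Bucket = "satisfied" | "tolerating" | "frustrating";

// Classify one page load against a threshold t (in seconds).
// The 4x ceiling for "tolerating" is the standard Apdex convention.
function classify(loadSeconds: number, t: number): Bucket {
  if (loadSeconds <= t) return "satisfied";
  if (loadSeconds <= 4 * t) return "tolerating";
  return "frustrating";
}

// Apdex-style score from bucket shares: satisfied loads count fully,
// tolerating loads count half, frustrating loads count for nothing.
// Dividing by the actual total keeps the result sane even when
// rounded percentages add up to 101.
function apdex(satisfied: number, tolerating: number, frustrating: number): number {
  const total = satisfied + tolerating + frustrating;
  return (satisfied + tolerating / 2) / total;
}

const originalScore = apdex(53, 40, 7); // (53 + 20) / 100 = 0.73
const redesignScore = apdex(80, 18, 3); // (80 + 9) / 101 ≈ 0.88
```

With a hypothetical threshold of 3 seconds, `classify(2, 3)` is Satisfied, `classify(10, 3)` is Tolerating, and `classify(13, 3)` is Frustrating. On this scale, where 1.0 is perfect, the numbers above work out to roughly 0.73 for the original site and 0.88 for the redesign.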

According to Google Analytics, average page load times went from a range of 5 to 15 seconds all the way down to a much narrower range of 4.5 to 6 seconds.

Wrap-Up

I feel like this post is getting too long so I am going to end it here. Hopefully this post helped you visualize the long journey that I had to navigate. Of course, I am nowhere near done in terms of optimizations.

I’d love to go into optimization techniques I’ve used on this redesign but most of them are widely known these days for frontend development… it’s mostly a matter of just implementing them.

I still need to upgrade to PHP 7.3, upgrade nginx, and enable the pagespeed module, but there is plenty of time to get to those over the next few weeks. I will write a new blog post detailing those changes, how they affected performance, and the people behind the scenes who helped me.

For now though, I am extremely happy with the speed, the design, and the response from my users. Do yourself a favor and check out the brand new FantasySP yourself.

Roughly a year and a half after I started the redesign, it went live without any downtime and with just a few minor bugs that needed to be fixed.

Now that this redesign is finished, I can implement features much faster since I am working with one unified codebase. Thanks for reading!